AITHENA

AI-based Connected and Cooperative Automated Mobility: Trustworthy, Explainable, and Accountable

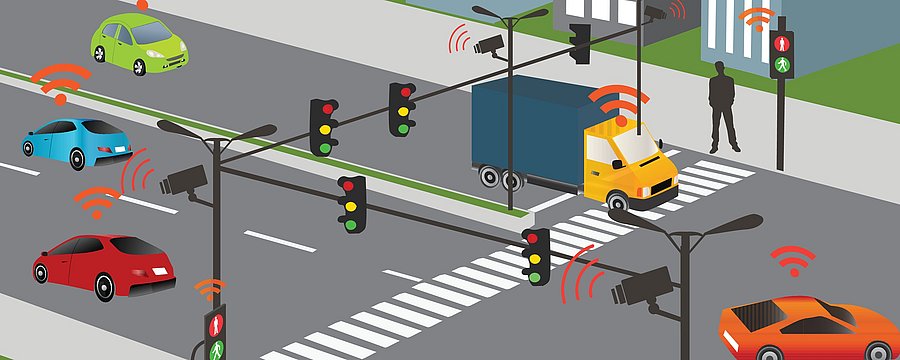

Research in connected and cooperative automated mobility (CCAM) is flourishing thanks to artificial intelligence (AI), which has achieved outstanding breakthroughs with the advent of sophisticated deep learning methods and the development of autonomous driving technologies (communications, microelectronics, sensors, and infrastructure). CCAM solutions have benefited from AI-based perception, situational awareness, and decision-making components. However, the response of these components in real-world traffic scenarios is largely unpredictable, and because they lack transparency and interpretability, users and stakeholders often regard them as black boxes.

The increasing use of AI components in vehicles and transportation is leading us down a path where the computer makes decisions and humans have to trust the AI blindly and live with those decisions. Explainable AI is attracting growing interest from various user groups: citizens who want to trust the systems they use, legal entities that are responsible for liability and accountability, and researchers who want to understand the limitations of AI and improve the models. The goal of AITHENA is to research and develop methods that make the AI models used in CCAM solutions transparent and interpretable.